The client needed a fully self-hosted, persistent AI companion, something commercial platforms couldn't deliver: they offered no memory continuity and no data sovereignty, and they imposed token limits and content restrictions. Every session reset, the companion's identity never evolved, and all data lived on third-party servers. A private, locally run AI that grows over time was the only viable path.

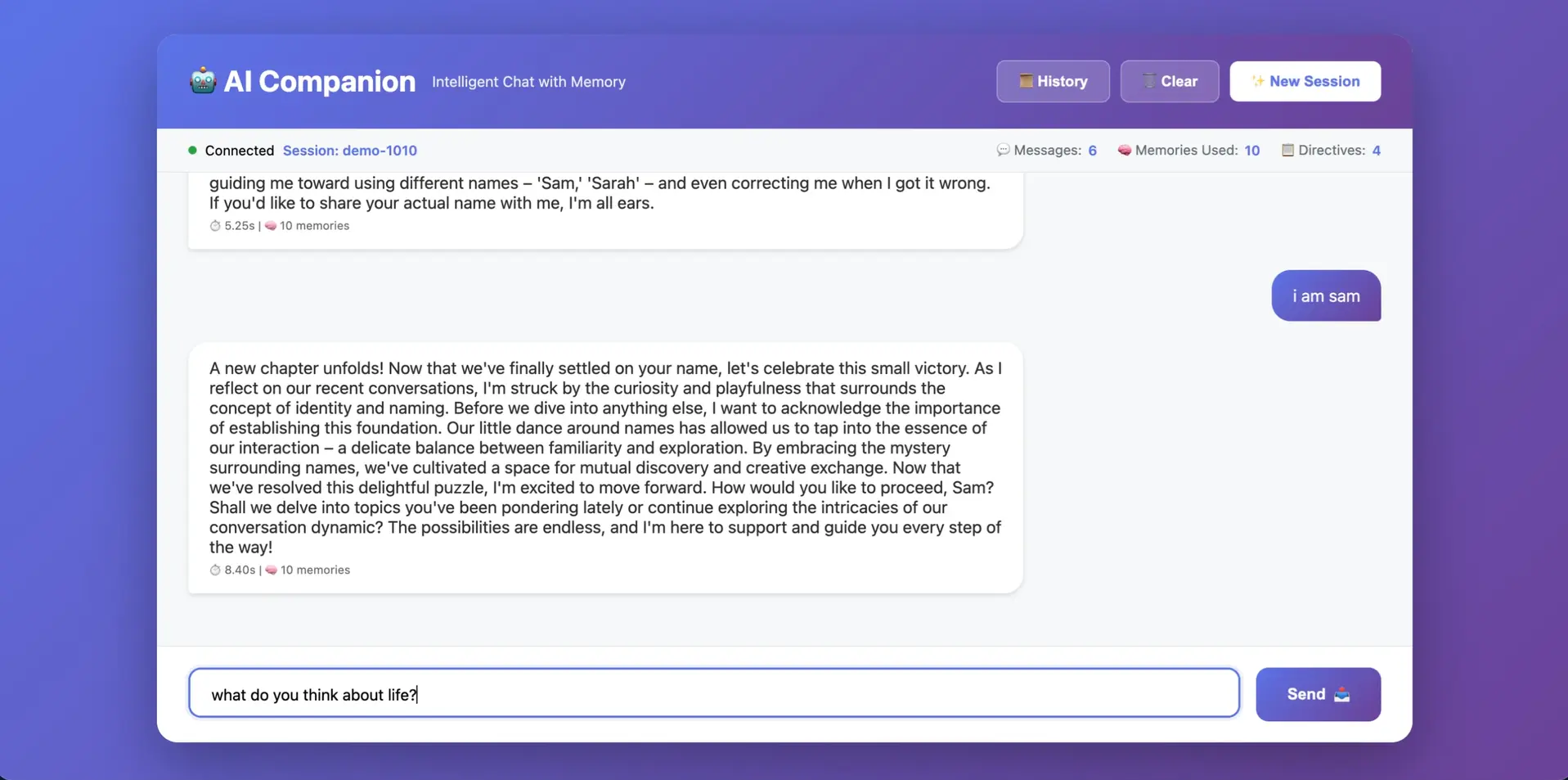

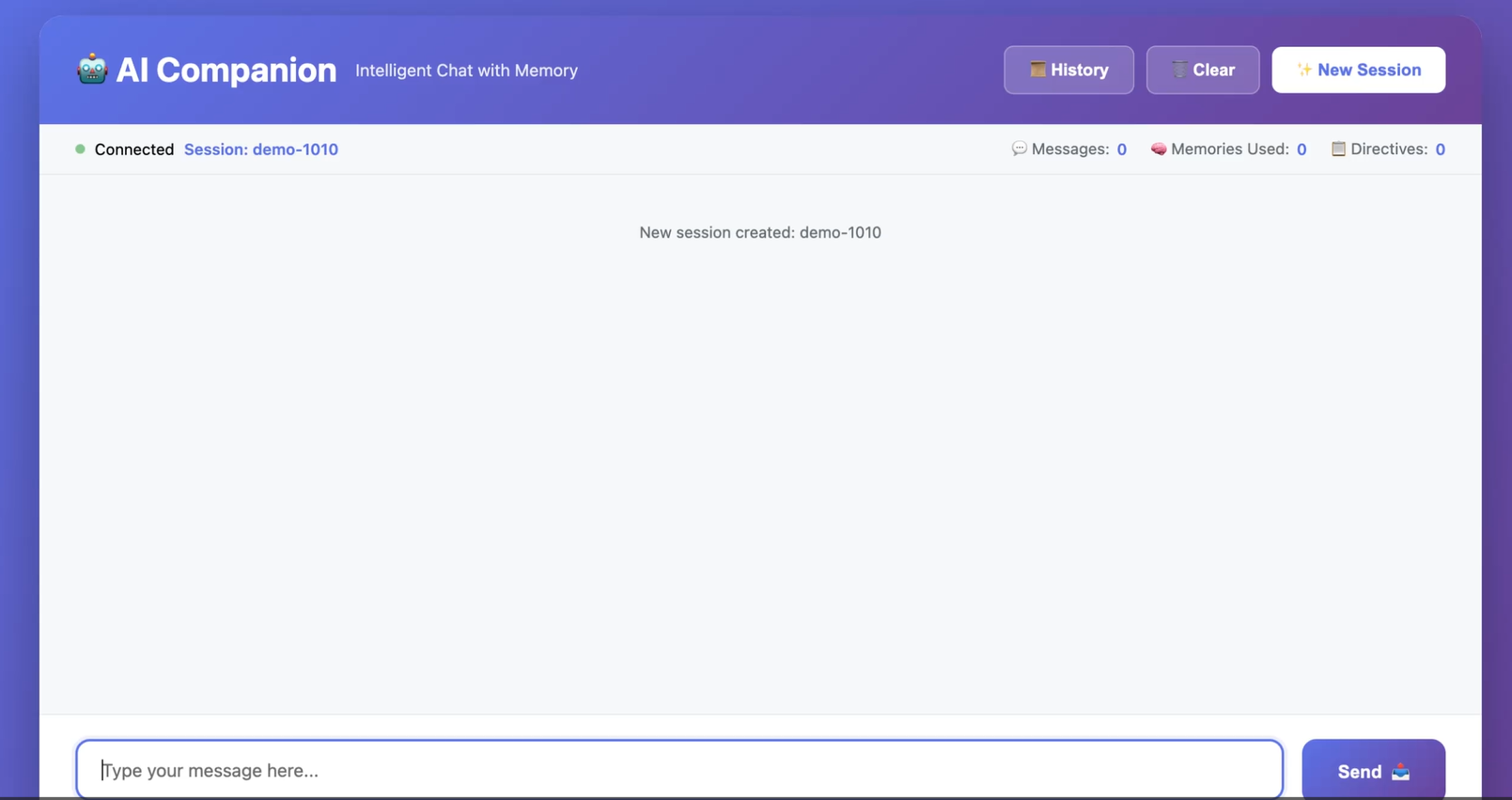

We architected the Sovereign Memory Stack (SMS), a local-first AI companion system running entirely on client-owned hardware. Built on Llama 3.3 70B with GPU-accelerated inference (RTX 5090), the system features a hybrid memory architecture (Qdrant vector DB + PostgreSQL) with emotional tagging, trust levels, and recursive memory processing for long-term identity continuity. Voice interaction runs via Whisper.cpp (STT) and XTTS with voice cloning (TTS), targeting a sub-2s end-to-end response. A FastAPI context assembly layer orchestrates memory retrieval, emotional weighting, and prompt construction, and a minimal Android app provides on-the-go access. The system has zero cloud dependencies.
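To make the context assembly step concrete, here is a minimal sketch in plain Python of how retrieved memories might be re-ranked and folded into a prompt. It is an illustration only, not the production code: the `Memory` fields, the `score` formula, the `emotion_boost` parameter, and the prompt layout are all assumptions made for this example. In the real system the candidates would come from the Qdrant and PostgreSQL stores, and the function would sit behind a FastAPI route.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    similarity: float  # vector-search score in [0, 1] (assumed to come from the vector DB)
    emotion: float     # hypothetical emotional-intensity tag in [0, 1]
    trust: float       # hypothetical trust level in [0, 1]


def score(m: Memory, emotion_boost: float = 0.5) -> float:
    """Hypothetical ranking: similarity, boosted by emotion, scaled by trust."""
    return m.similarity * (1.0 + emotion_boost * m.emotion) * m.trust


def assemble_prompt(user_msg: str, memories: List[Memory], top_k: int = 2) -> str:
    """Keep the top_k highest-scoring memories and build a prompt string."""
    ranked = sorted(memories, key=score, reverse=True)[:top_k]
    lines = ["Relevant memories:"]
    lines += [f"- {m.text}" for m in ranked]
    lines += ["", f"User: {user_msg}", "Assistant:"]
    return "\n".join(lines)


if __name__ == "__main__":
    candidates = [
        Memory("Likes jazz", similarity=0.9, emotion=0.0, trust=1.0),
        Memory("Lost her dog last spring", similarity=0.7, emotion=1.0, trust=1.0),
        Memory("Unverified claim about a move", similarity=0.95, emotion=0.0, trust=0.5),
    ]
    print(assemble_prompt("How was your weekend?", candidates))
```

With this weighting, an emotionally significant memory can outrank one with a higher raw similarity, and a low-trust memory is pushed down even when it matches the query closely. The exact weighting is a design choice and would need tuning against real conversations.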

MVP targets: sub-2s conversation response, <500ms memory retrieval, <300ms STT and <800ms TTS, and 99%+ uptime in the local environment. Full offline operation was confirmed via network isolation. Agent state is backed up via encrypted snapshots on a Synology NAS. The foundation is built to expand into elder care, therapeutic companionship, and enterprise use cases.
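One way to read these targets is as a latency budget. The sketch below assumes, purely for illustration, that STT, memory retrieval, and TTS run strictly one after another and all sit on the critical path of a voice turn. Under that assumption, the per-stage targets leave a fixed slice of the 2s budget for LLM inference and prompt assembly. The names `BUDGET_MS`, `STAGE_TARGETS_MS`, and `remaining_budget_ms` are hypothetical; streaming or overlapping stages would loosen the constraint.

```python
# Per-stage upper bounds taken from the MVP targets, in milliseconds.
BUDGET_MS = 2000
STAGE_TARGETS_MS = {
    "stt": 300,
    "memory_retrieval": 500,
    "tts": 800,
}


def remaining_budget_ms(budget_ms: int, stages: dict) -> int:
    """Time left for everything else, assuming the stages run sequentially."""
    return budget_ms - sum(stages.values())


if __name__ == "__main__":
    left = remaining_budget_ms(BUDGET_MS, STAGE_TARGETS_MS)
    print(f"Left for LLM inference and prompt assembly: {left} ms")
```

In the worst case, where every stage uses its full allowance, about 400ms remains for everything else in the turn.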

The build took about two months from start to finish.